Most everyone has heard of large language models, or LLMs, now that generative AI has entered our daily lexicon through its text and image generating capabilities and its promise to change how enterprises handle core business functions. Now, more than ever, talking to AI through a chat interface, or having it perform specific tasks for you, is a tangible reality. Enormous strides are being made to adopt this technology and improve daily experiences for individuals and consumers.

But what about the world of voice? So much attention has been given to LLMs as a catalyst for enhanced generative AI chat capabilities that few are talking about how the same technology can be applied to voice-based conversational experiences. The modern contact center is currently dominated by rigid conversational experiences (yes, Interactive Voice Response or IVR is still the norm). Enter the world of Large Speech Models, or LSMs. Yes, LLMs have a more vocal cousin with the benefits and possibilities you expect from generative AI, but this time customers can interact with the assistant over the phone.

Over the past few months, IBM watsonx development teams and IBM Research have been hard at work developing a new, state-of-the-art Large Speech Model (LSM). Based on transformer technology, LSMs use vast amounts of training data and large numbers of model parameters to deliver accurate speech recognition. Purpose-built for customer care use cases like self-service phone assistants and real-time call transcription, our LSM delivers highly advanced transcriptions out-of-the-box to create a seamless customer experience.

We are very excited to announce the deployment of new LSMs in English and Japanese, now available exclusively in closed beta to Watson Speech to Text and watsonx Assistant phone customers.

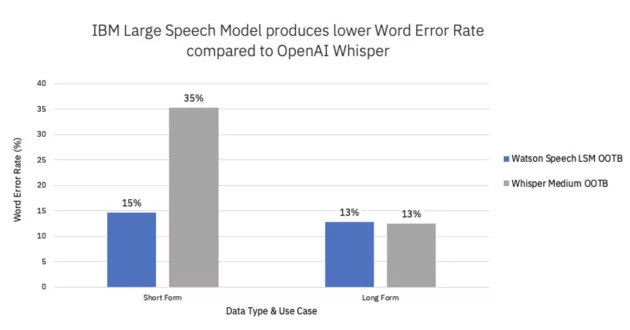

We can go on and on about how great these models are, but what it really comes down to is performance. Based on internal benchmarking, the new LSM is our most accurate speech model yet, outperforming OpenAI’s Whisper model on short-form English use cases. We compared the out-of-the-box performance of our English LSM with OpenAI’s Whisper model across five real customer use cases on the phone, and found the Word Error Rate (WER) of the IBM LSM to be 42% lower than that of the Whisper model (see footnote (1) for evaluation methodology).
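For readers unfamiliar with the metric, WER is conventionally the word-level edit distance (substitutions, deletions, and insertions) between a reference transcript and the recognizer's output, divided by the number of reference words. The sketch below illustrates that standard definition and how a "42% lower" figure reads as a relative reduction. It is not the evaluation code behind the benchmark; the function names, the sample sentences, and the WER values of 0.10 and 0.058 are hypothetical and chosen only for illustration.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words (standard Levenshtein recurrence).
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


def relative_reduction(baseline_wer: float, new_wer: float) -> float:
    """Fraction by which new_wer is lower than baseline_wer."""
    return (baseline_wer - new_wer) / baseline_wer


# Hypothetical example: one substituted word out of five reference words.
wer = word_error_rate("please check my account balance",
                      "please check my count balance")

# Hypothetical WER values, not figures from the benchmark.
reduction = relative_reduction(0.10, 0.058)
```

Under this reading, a baseline WER of 10% and a new WER of 5.8% would correspond to a 42% relative reduction, not a 42 percentage point drop.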

IBM’s LSM is also 5x smaller than the Whisper model (5x fewer parameters), meaning it processes audio 10x faster when run on the same hardware. With streaming, the LSM finishes processing when the audio finishes; Whisper, on the other hand, processes audio in block mode (for example, in 30-second intervals). Let’s look at an example: when processing an audio file that is shorter than 30 seconds, say 12 seconds, Whisper pads it with silence and still has to process a full 30-second block; the IBM LSM finishes once the 12 seconds of audio is complete.
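The arithmetic behind that example can be sketched as follows. This is a simplified model of the block-mode behavior described above, assuming every block is padded to its full length; it does not call Whisper or the IBM LSM, and the function names are hypothetical.

```python
import math


def block_mode_audio_seconds(duration_s: float, block_s: float = 30.0) -> float:
    """Seconds of audio processed when input is padded up to whole blocks."""
    if duration_s <= 0:
        return 0.0
    return math.ceil(duration_s / block_s) * block_s


def padding_seconds(duration_s: float, block_s: float = 30.0) -> float:
    """Seconds of silence added to fill the final block."""
    return block_mode_audio_seconds(duration_s, block_s) - duration_s


# The 12-second utterance from the example, with 30-second blocks.
processed = block_mode_audio_seconds(12.0)
padding = padding_seconds(12.0)
```

For the 12-second clip, the block-mode model processes 30 seconds of input, 18 of which are padding, while a streaming recognizer only ever sees the 12 seconds of real audio. The gap matters most for the short utterances typical of phone self-service.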

These tests indicate that our LSM is highly accurate on short-form use cases. But there’s more. The LSM also showed accuracy comparable to Whisper’s on long-form use cases (like call analytics and call summarization) as shown in the chart below.

How can you get started with these models?

Apply for our closed beta user program and our Product Management team will reach out to you to schedule a call. As the IBM LSM is in closed beta, some features and functionalities are still in development.

Source: ibm.com
